Securely Hosting Multiple Apps on a Single VPS

A Complete Production Grade Guide

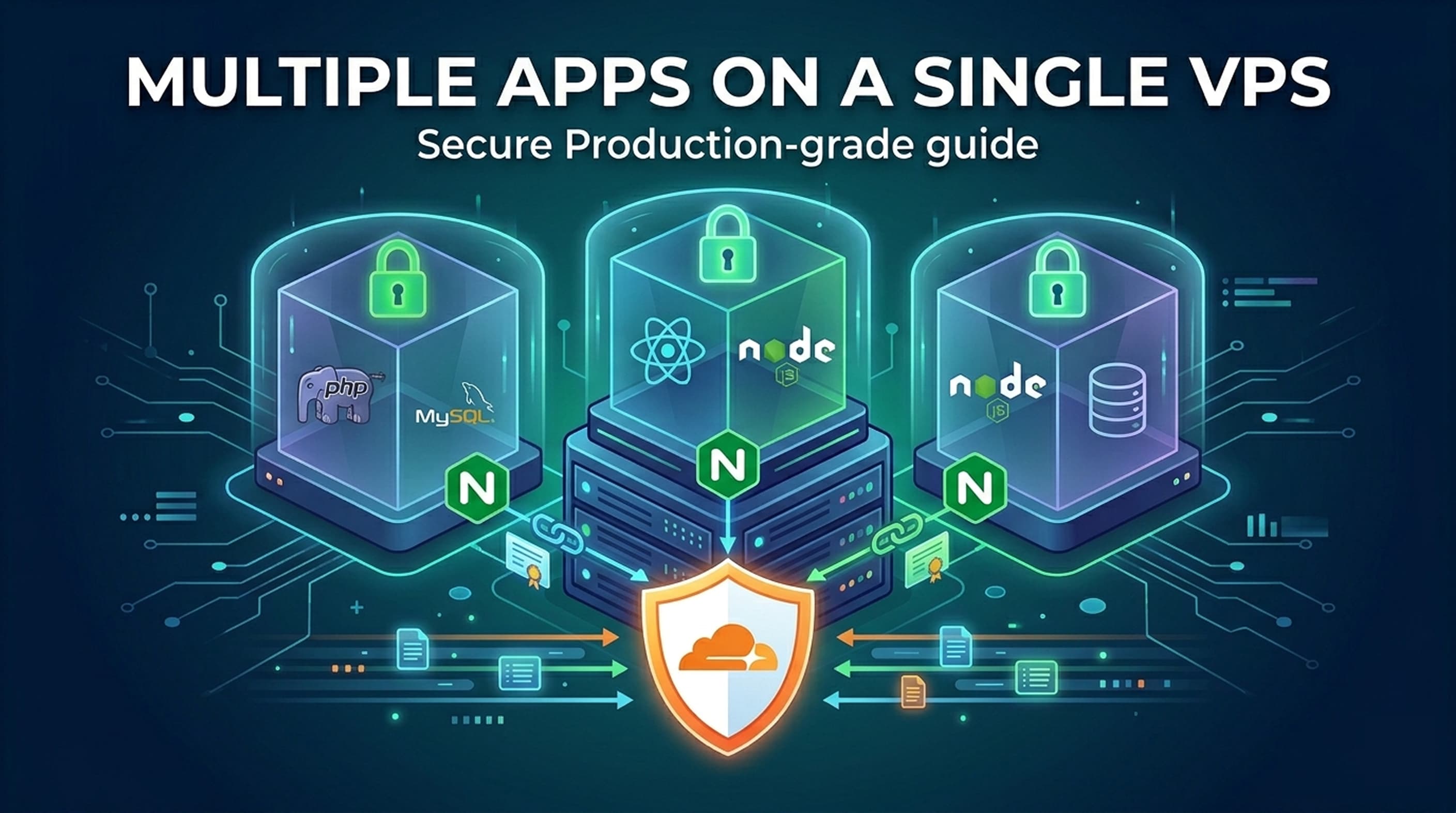

To host multiple applications on a single VPS, create a dedicated Linux user for each app, give each PHP application its own PHP-FPM pool with a unique socket, configure a separate Nginx server block per domain, and create one database user per app. Front the whole stack with Cloudflare in Full-strict mode.

When you run a fleet of small or moderately busy applications (a few Laravel/PHP APIs, a couple of Next.js frontends, a Node.js worker), it rarely makes sense to spin up a dedicated server for each one. A single well-configured VPS (2-4 vCPU / 4-8 GB RAM is usually enough) can host a dozen of them comfortably, provided the isolation, process management, and security layers are done right.

This guide walks through that exact setup, end-to-end, on Ubuntu 22.04/24.04/26.04 LTS. The architecture we're building looks like this:

Every app runs as its own unprivileged Linux user, talks to its own PHP-FPM pool or Node process, and connects with its own database user. That gives you filesystem isolation, process isolation, and database isolation on a single machine.

Step 0: Before you begin: server hardening (DON'T skip this)

Before any application user is created, lock the box down. The single biggest mistake people make is creating app users after the server has already been exposed for hours with default settings.

#1. Update the system

sudo apt update && sudo apt upgrade -y

sudo apt install -y unattended-upgrades fail2ban ufw \

curl wget gnupg lsb-release \

ca-certificates software-properties-common

sudo dpkg-reconfigure -plow unattended-upgrades

Ubuntu's unattended-upgrades: https://ubuntu.com/server/docs/how-to/software/automatic-updates/

#2. Create a sudo admin user (not root, not the app users)

sudo adduser sysadmin

sudo usermod -aG sudo sysadmin

sudo mkdir -p /home/sysadmin/.ssh

sudo cp ~/.ssh/authorized_keys /home/sysadmin/.ssh/

sudo chown -R sysadmin:sysadmin /home/sysadmin/.ssh

sudo chmod 700 /home/sysadmin/.ssh

sudo chmod 600 /home/sysadmin/.ssh/authorized_keys

⚠️ Test the new user from a separate terminal before locking yourself out:

ssh sysadmin@<your.server.ip>

#3. Disable password and root SSH login

Edit /etc/ssh/sshd_config and set:

PermitRootLogin no

PasswordAuthentication no

PubkeyAuthentication yes

sudo systemctl restart ssh

⚠️ Caution: If you skip the SSH-key test step and disable password auth, you can lock yourself out. Always keep one logged-in session open until you've verified the new login works.
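One way to confirm that the settings actually took effect, rather than trusting the file you edited, is to inspect the effective configuration that sshd -T prints. The sketch below is illustrative only: check_sshd_hardening is a hypothetical helper name, and it assumes the lower-case "keyword value" lines that sshd -T emits. On recent Ubuntu releases, drop-in files under /etc/ssh/sshd_config.d/ are included by the main file and can take precedence over it, which is the kind of mismatch this check is meant to surface.

```shell
# Hypothetical helper: reads `sshd -T` output on stdin and reports whether
# the three settings from this step are in effect.
# Returns non-zero if any of them is missing or has a different value.
check_sshd_hardening() {
  local cfg want status=0
  cfg=$(cat)
  for want in "permitrootlogin no" \
              "passwordauthentication no" \
              "pubkeyauthentication yes"; do
    # -x: match the whole line, -i: ignore case
    if grep -qix -- "$want" <<<"$cfg"; then
      echo "ok:   $want"
    else
      echo "FAIL: $want"
      status=1
    fi
  done
  return "$status"
}

# Usage on the server (sshd -t validates syntax, sshd -T prints the
# effective configuration):
#   sudo sshd -t && sudo sshd -T | check_sshd_hardening
```

Run it before closing your existing session; a FAIL line means some other file is still overriding the value you set.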

#4. Firewall

Cloudflare will be the only thing reaching ports 80/443. SSH should ideally be restricted to your IP, but at a minimum:

sudo ufw default deny incoming

sudo ufw default allow outgoing

sudo ufw allow OpenSSH

sudo ufw allow 80/tcp

sudo ufw allow 443/tcp

sudo ufw enable

For maximum lockdown, restrict 80/443 to Cloudflare's IP ranges only (more on that in the Cloudflare section).

#5. fail2ban

sudo systemctl enable --now fail2ban

The default jail covers SSH out of the box.

Step 1: Adding the application user

Each application gets its own Linux user and group. This is what makes the rest of the isolation possible; wrong file permissions inside one app cannot leak into another.

#1. Create the user (no password, no sudo)

sudo adduser --disabled-password --gecos "" <app_user_name>

For example, let's create an app user named chotkari_api:

sudo adduser --disabled-password --gecos "" chotkari_api

What this does:

- --disabled-password creates the account with a locked password field. Nobody can su or SSH into it with a password; access is only via your sysadmin account, or by adding a key to the user's ~/.ssh/authorized_keys so that a developer or CI/CD job can SSH in directly.

- --gecos "" skips the interactive Full Name / Phone prompts.

- The user is not added to the sudo group. Do not run usermod -aG sudo chotkari_api and do not add them to sudoers with visudo.

Verify:

sudo passwd -S chotkari_api

# chotkari_api L 04/05/2026 0 99999 7 -1 ← "L" means locked. Good ✅.

groups chotkari_api

# chotkari_api : chotkari_api ← only its own group. Good ✅.

#2. Set up the application directory

sudo mkdir -p /home/chotkari_api/app

sudo chown -R chotkari_api:chotkari_api /home/chotkari_api

sudo chmod 750 /home/chotkari_api

Here, chotkari_api is the app user we created earlier.

750 means: the user can do everything, the group can read/execute, and everyone else (including other app users) cannot even list the directory. This is the entire point.
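If you manage many apps, it can help to check this mode mechanically instead of eyeballing ls output. The following is a minimal sketch, assuming GNU stat (as shipped on Ubuntu); check_home_mode is a hypothetical helper name, not part of any tool.

```shell
# Hypothetical helper: succeeds only if the directory's mode is exactly 750
# (owner rwx, group r-x, others nothing).
check_home_mode() {
  local dir=$1 mode
  mode=$(stat -c '%a' "$dir") || return 2
  if [ "$mode" = "750" ]; then
    echo "ok: $dir is 750"
  else
    echo "FAIL: $dir is $mode, expected 750"
    return 1
  fi
}

# Usage on the server:
#   sudo bash -c 'for d in /home/*_api /home/*_web; do check_home_mode "$d"; done'
```

The glob in the usage comment simply follows the naming convention suggested in this guide; adjust it to your own user names.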

#3. Allow nginx to read into the app

Nginx runs as the www-data user and needs to read static files (CSS, JS, images, and the Laravel public/ directory). Add www-data to the app's group, then make sure web-readable directories use group-read permissions:

sudo usermod -aG chotkari_api www-data

Restart nginx for that group membership to take effect; a running process keeps the groups it started with.

ℹ️ Best practice: repeat the user-creation pattern for every app: blog_api, dashboard_web, notifications_node, etc. Naming convention: lowercase, underscore-separated, suffixed with role (_api, _web, _worker). It pays off when you read logs at 2am.

Step 2: PHP installation and per-user FPM pools

The reason we give each app its own FPM pool is twofold: isolation (one app crashing or being exploited can't read another app's files because the pool runs as a different user) and resource control (you can cap CPU/memory per app).

#1. Install PHP and required extensions

sudo add-apt-repository ppa:ondrej/php -y

sudo apt update

sudo apt install -y \

php8.4-fpm php8.4-cli \

php8.4-mbstring php8.4-xml php8.4-curl php8.4-zip \

php8.4-bcmath php8.4-intl php8.4-gd \

php8.4-mysql php8.4-pgsql \

php8.4-redis php8.4-opcache

Pick the PHP version your apps actually require. If you have one app on 8.1 and another on 8.3, install both — they live in /etc/php/8.1/ and /etc/php/8.3/ side by side and can have separate pools.

#2. Disable the default pool

The default www.conf pool runs as www-data. Don't use it for any application:

sudo mv /etc/php/8.4/fpm/pool.d/www.conf \

/etc/php/8.4/fpm/pool.d/www.conf.disabled

#3. Create a per-app pool

Create /etc/php/8.4/fpm/pool.d/chotkari_api.conf:

[chotkari_api]

user = chotkari_api

group = chotkari_api

listen = /run/php/php8.4-fpm-chotkari_api.sock

listen.owner = www-data

listen.group = www-data

listen.mode = 0660

pm = dynamic

pm.max_children = 10

pm.start_servers = 2

pm.min_spare_servers = 1

pm.max_spare_servers = 3

pm.max_requests = 500

; Per-pool error log

php_admin_value[error_log] = /var/log/php-fpm/chotkari_api.error.log

php_admin_flag[log_errors] = on

; Per-pool resource limits

php_admin_value[memory_limit] = 256M

php_admin_value[upload_max_filesize] = 32M

php_admin_value[post_max_size] = 32M

; Security: only allow this app to access its own directory and tmp

php_admin_value[open_basedir] = /home/chotkari_api/app:/tmp:/var/lib/php/sessions

php_admin_value[disable_functions] = exec,passthru,shell_exec,system,proc_open,popen

Key things to notice:

- The socket file is unique per app (php8.4-fpm-chotkari_api.sock). Nginx will point each server block to the matching socket.

- listen.owner = www-data, together with listen.mode = 0660, means nginx (running as www-data) can read and write the socket, while other app users cannot connect to it.

- pm.max_children is the most important tuning knob. A rough rule: max_children = RAM available to this pool in MB / memory a typical worker actually uses in MB. For 10 apps sharing 4 GB, give each ~400 MB; if a worker averages around 40 MB (an illustrative figure, measure your own), that is ~10 children. The 256M memory_limit is a per-request ceiling, and most workers won't peak at the same time.

- open_basedir is the seatbelt. If your Laravel app is exploited and tries to file_get_contents('/home/blog_api/.env'), PHP refuses.
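The sizing arithmetic for pm.max_children can be sketched as a plain integer division. This is only an illustration: fpm_max_children is a hypothetical helper, and the 40 MB per-worker figure is an assumption standing in for whatever your own workers actually use.

```shell
# Hypothetical helper: integer division of a pool's RAM budget by the
# memory one worker is assumed to use (both in MB).
fpm_max_children() {
  local budget_mb=$1 per_worker_mb=$2
  if [ "$per_worker_mb" -le 0 ]; then
    echo "per-worker memory must be positive" >&2
    return 1
  fi
  echo $(( budget_mb / per_worker_mb ))
}

fpm_max_children 400 40    # prints 10
fpm_max_children 400 256   # prints 1 (every worker at the full memory_limit)
```

To replace the assumed figure with a measured one, something like ps -o rss= -C php-fpm8.4 lists the resident memory of running workers in KB (the process name is an assumption; check ps aux on your system).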

Ref: https://www.php.net/manual/en/install.fpm.configuration.php#:~:text=List%20of%20pool%20directives

#4. Create the log directory

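sudo mkdir -p /var/log/php-fpm

# PHP workers write this log as the pool user, so the file must be writable by chotkari_api

sudo touch /var/log/php-fpm/chotkari_api.error.log

sudo chown chotkari_api:chotkari_api /var/log/php-fpm/chotkari_api.error.log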

#5. Reload FPM and verify the socket exists

sudo systemctl restart php8.4-fpm

ls -la /run/php/

# srw-rw---- 1 www-data www-data 0 May 4 10:22 php8.4-fpm-chotkari_api.sock

If the socket isn't there, check journalctl -u php8.4-fpm -n 50 — pool config errors are the usual culprit.

Step 3: Nginx — single instance, many sites, behind Cloudflare

Nginx will accept all traffic on 80/443, route by hostname, and either serve files / talk to PHP-FPM (for Laravel and PHP apps) or reverse-proxy to a Node process (for Next.js and Node APIs).

#1. Install

sudo apt install -y nginx

sudo systemctl enable --now nginx

#2. Cloudflare real-IP configuration (do this once, globally)

When Cloudflare proxies your traffic, every request arrives from a Cloudflare IP. If you don't tell nginx to trust Cloudflare and look at the CF-Connecting-IP header, your access logs, rate-limiting, and $remote_addr everywhere will all show Cloudflare's IPs instead of your real visitors.

Cloudflare IP list page: https://www.cloudflare.com/ips/

Create /etc/nginx/conf.d/cloudflare.conf:

# Cloudflare IPv4 ranges - keep this list updated from

# https://www.cloudflare.com/ips-v4

set_real_ip_from 173.245.48.0/20;

set_real_ip_from 103.21.244.0/22;

set_real_ip_from 103.22.200.0/22;

set_real_ip_from 103.31.4.0/22;

set_real_ip_from 141.101.64.0/18;

set_real_ip_from 108.162.192.0/18;

set_real_ip_from 190.93.240.0/20;

set_real_ip_from 188.114.96.0/20;

set_real_ip_from 197.234.240.0/22;

set_real_ip_from 198.41.128.0/17;

set_real_ip_from 162.158.0.0/15;

set_real_ip_from 104.16.0.0/13;

set_real_ip_from 104.24.0.0/14;

set_real_ip_from 172.64.0.0/13;

set_real_ip_from 131.0.72.0/22;

# IPv6

# https://www.cloudflare.com/ips-v6

set_real_ip_from 2400:cb00::/32;

set_real_ip_from 2606:4700::/32;

set_real_ip_from 2803:f800::/32;

set_real_ip_from 2405:b500::/32;

set_real_ip_from 2405:8100::/32;

set_real_ip_from 2a06:98c0::/29;

set_real_ip_from 2c0f:f248::/32;

real_ip_header CF-Connecting-IP;

real_ip_recursive on;

Cloudflare's IP list does change occasionally — automate this with a cron job pulling from https://www.cloudflare.com/ips-v4 and https://www.cloudflare.com/ips-v6 once a week.
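The weekly refresh could be sketched roughly as follows. render_cloudflare_realip is a hypothetical helper, not a Cloudflare or nginx tool; it only turns a list of CIDR ranges into the same directives as the hand-written file above. The cron wiring in the trailing comment is an assumption about how you might run it.

```shell
# Hypothetical helper: reads CIDR ranges (one per line) on stdin and prints
# an nginx realip config equivalent to the hand-written cloudflare.conf.
render_cloudflare_realip() {
  local cidr
  # "|| [ -n ... ]" keeps the last line even without a trailing newline.
  while read -r cidr || [ -n "$cidr" ]; do
    [ -z "$cidr" ] && continue
    echo "set_real_ip_from $cidr;"
  done
  echo "real_ip_header CF-Connecting-IP;"
  echo "real_ip_recursive on;"
}

# Possible weekly cron usage (run as root). Both lists are fetched first,
# so a failed download stops the chain before the live file is touched:
#   v4=$(curl -fsS https://www.cloudflare.com/ips-v4) &&
#   v6=$(curl -fsS https://www.cloudflare.com/ips-v6) &&
#   printf '%s\n%s\n' "$v4" "$v6" | render_cloudflare_realip \
#     > /etc/nginx/conf.d/cloudflare.conf &&
#   nginx -t && systemctl reload nginx
```

Keeping the rendering step separate from the download makes it easy to test with a fixed list before pointing it at the live URLs.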

Ref: https://nginx.org/en/docs/http/ngx_http_realip_module.html

#3. Tighten the firewall to Cloudflare only (recommended)

# Drop the broad allow-80/443

sudo ufw delete allow 80/tcp

sudo ufw delete allow 443/tcp

# Allow only Cloudflare ranges

for ip in $(curl -s https://www.cloudflare.com/ips-v4); do

sudo ufw allow from $ip to any port 80 proto tcp

sudo ufw allow from $ip to any port 443 proto tcp

done

sudo ufw reload

This means direct-to-IP traffic is dropped at the firewall; attackers can't bypass Cloudflare by hitting your origin IP if they discover it.
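The loop above only covers Cloudflare's IPv4 list. If your server also has a public IPv6 address, the same rules are needed for the IPv6 ranges. A cautious way to do both is to print the ufw commands first and review them before applying. The helper below is a hypothetical sketch that emits the same rule form used in this guide; it assumes IPv6 support is enabled in ufw.

```shell
# Hypothetical helper: reads CIDR ranges (one per line) on stdin and prints
# the matching ufw commands without running them (a dry run).
print_cloudflare_ufw_rules() {
  local cidr port
  while read -r cidr || [ -n "$cidr" ]; do
    [ -z "$cidr" ] && continue
    for port in 80 443; do
      echo "ufw allow from $cidr to any port $port proto tcp"
    done
  done
}

# Usage on the server: review the printed rules, then pipe them to `sudo sh`.
#   v4=$(curl -fsS https://www.cloudflare.com/ips-v4) &&
#   v6=$(curl -fsS https://www.cloudflare.com/ips-v6) &&
#   printf '%s\n%s\n' "$v4" "$v6" | print_cloudflare_ufw_rules
```

Printing instead of executing keeps a bad download or a typo from silently rewriting the firewall.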

#4. Tune nginx.conf

Edit /etc/nginx/nginx.conf and adjust the top-level, events {}, and http {} settings:

worker_processes auto;

worker_rlimit_nofile 65535;

events {

worker_connections 4096;

multi_accept on;

}

http {

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

server_tokens off;

client_max_body_size 50M;

# Logging — use real client IP (set by the cloudflare.conf above)

log_format main '$remote_addr - $remote_user [$time_local] '

'"$request" $status $body_bytes_sent '

'"$http_referer" "$http_user_agent" '

'rt=$request_time uct="$upstream_connect_time" '

'urt="$upstream_response_time"';

access_log /var/log/nginx/access.log main;

error_log /var/log/nginx/error.log warn;

# Gzip

gzip on;

gzip_vary on;

gzip_min_length 1024;

gzip_types text/plain text/css application/json application/javascript

text/xml application/xml application/xml+rss text/javascript

image/svg+xml;

# Security headers (also set in Cloudflare; defence in depth)

add_header X-Content-Type-Options nosniff always;

add_header X-Frame-Options SAMEORIGIN always;

add_header Referrer-Policy strict-origin-when-cross-origin always;

include /etc/nginx/conf.d/*.conf;

include /etc/nginx/sites-enabled/*;

}

#5. Server block: a Laravel/PHP app

Create /etc/nginx/sites-available/api.chotkari.com.conf:

server {

listen 80;

listen [::]:80;

server_name api.chotkari.com;

root /home/chotkari_api/app/public;

index index.php index.html;

# Cloudflare terminates TLS; origin can stay HTTP behind the firewall,

# but we recommend origin TLS for "Full (strict)" mode (see the Cloudflare configuration section).

access_log /var/log/nginx/chotkari_api.access.log main;

error_log /var/log/nginx/chotkari_api.error.log warn;

client_max_body_size 32M;

# Static assets — long cache, no PHP

location ~* \.(jpg|jpeg|png|gif|ico|css|js|svg|woff2?|ttf|eot)$ {

expires 30d;

add_header Cache-Control "public, no-transform";

try_files $uri =404;

}

location / {

try_files $uri $uri/ /index.php?$query_string;

}

location ~ \.php$ {

try_files $uri =404;

fastcgi_split_path_info ^(.+\.php)(/.+)$;

fastcgi_pass unix:/run/php/php8.4-fpm-chotkari_api.sock;

fastcgi_index index.php;

include fastcgi_params;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

fastcgi_param PATH_INFO $fastcgi_path_info;

fastcgi_param HTTPS on; # Cloudflare proxied — origin sees HTTP, but app should think HTTPS

fastcgi_param HTTP_X_FORWARDED_PROTO https;

}

# Block hidden files (e.g. .env, .git)

location ~ /\. {

deny all;

}

}

The two critical lines that tie everything together:

- fastcgi_pass unix:/run/php/php8.4-fpm-chotkari_api.sock; is the per-user socket. Each Laravel app has its own.

- root /home/chotkari_api/app/public; makes Laravel's public/ directory the only thing exposed as the webroot.

#6. Server block: a Next.js or Node app (reverse proxy)

Frontend frameworks like Next.js (when running as a Node server, not a static export) and any Node API server should run on a high local port and be reverse-proxied. Create /etc/nginx/sites-available/dashboard.chotkari.com.conf:

upstream dashboard_upstream {

server 127.0.0.1:3000;

keepalive 32;

}

server {

listen 80;

listen [::]:80;

server_name dashboard.chotkari.com;

access_log /var/log/nginx/dashboard.access.log main;

error_log /var/log/nginx/dashboard.error.log warn;

client_max_body_size 25M;

# Next.js static assets — let nginx serve them directly for speed

location /_next/static/ {

alias /home/chotkari_dashboard/app/.next/static/;

expires 365d;

access_log off;

}

location / {

proxy_pass http://dashboard_upstream;

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection "upgrade";

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto https;

proxy_cache_bypass $http_upgrade;

proxy_read_timeout 60s;

}

}

#7. Enable each site

sudo ln -s /etc/nginx/sites-available/api.chotkari.com.conf /etc/nginx/sites-enabled/

sudo ln -s /etc/nginx/sites-available/dashboard.chotkari.com.conf /etc/nginx/sites-enabled/

sudo nginx -t

sudo systemctl reload nginx

⚠️ Caution: always run nginx -t before reloading. A typo in any sites-enabled file takes down every site. Reload (graceful) is preferred over restart.

Step 4: Database setup: separate user per app, never use root

The principle is identical to Linux users: one DB user per application, with privileges scoped to its own database only. If chotkari_api's database password ever leaks, the blast radius is one database, not all of them.

#1. Option A: PostgreSQL

sudo apt install -y postgresql postgresql-contrib

sudo systemctl enable --now postgresql

PostgreSQL's "root" is the postgres OS user. It uses peer authentication by default. sudo -u postgres psql works without a password. That's fine for administration; just never use it from an app.

sudo -u postgres psql

Inside psql:

-- Create app user with its own password

CREATE USER chotkari_api WITH PASSWORD 'a-strong-randomly-generated-password';

-- Create database owned by that user

CREATE DATABASE chotkari_api_db OWNER chotkari_api ENCODING 'UTF8';

-- Lock down: revoke public access to the new DB

REVOKE ALL ON DATABASE chotkari_api_db FROM PUBLIC;

GRANT ALL PRIVILEGES ON DATABASE chotkari_api_db TO chotkari_api;

\q

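Configure pg_hba.conf (/etc/postgresql/16/main/pg_hba.conf; the directory name is your installed PostgreSQL major version) so the app connects via password over a local socket or 127.0.0.1. Rules are matched top to bottom and the first match wins, so place these lines above any broader local/host entries: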

# TYPE DATABASE USER ADDRESS METHOD

local all postgres peer

local chotkari_api_db chotkari_api scram-sha-256

host chotkari_api_db chotkari_api 127.0.0.1/32 scram-sha-256

sudo systemctl reload postgresql

In Laravel's .env:

DB_CONNECTION=pgsql

DB_HOST=127.0.0.1

DB_PORT=5432

DB_DATABASE=chotkari_api_db

DB_USERNAME=chotkari_api

DB_PASSWORD=a-strong-randomly-generated-password

#2. Option B: MySQL / MariaDB

sudo apt install -y mysql-server

sudo mysql_secure_installation

The interactive script asks you to set the root password, remove anonymous users, disallow remote root login, and remove the test database. Say yes to all of those.

Then create the app user and database:

sudo mysql -u root -p

CREATE DATABASE chotkari_api_db CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;

CREATE USER 'chotkari_api'@'localhost' IDENTIFIED BY 'a-strong-randomly-generated-password';

GRANT ALL PRIVILEGES ON chotkari_api_db.* TO 'chotkari_api'@'localhost';

FLUSH PRIVILEGES;

EXIT;

Notice the privilege scope: chotkari_api_db.*, not *.*. The user has zero visibility into any other database.

ℹ️ Best practice: generate passwords with openssl rand -base64 32 and store them only in the app's .env file (which lives at /home/chotkari_api/app/.env and is owned chotkari_api:chotkari_api with mode 600). Never commit them to git, never put them in shell history (use mysql_config_editor or read from a file).
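One way to keep a generated password out of your shell history and terminal scrollback is to write it straight into a file that is private from the moment it is created. The sketch below is illustrative; write_secret_file is a hypothetical helper and the file location in the usage comment is an assumption.

```shell
# Hypothetical helper: generate a random secret and write it to a new file
# that is created with mode 600 (umask 077 applies inside the subshell only).
# Refuses to overwrite an existing file, whose mode it could not guarantee.
write_secret_file() {
  local path=$1
  if [ -e "$path" ]; then
    echo "refusing to overwrite $path" >&2
    return 1
  fi
  (
    umask 077
    openssl rand -base64 32 > "$path"
  )
}

# Usage as the app user (path is an example):
#   write_secret_file ~/db_password
# Then paste the value into .env and the CREATE USER statement, and delete
# the file once both are set.
```

The password never appears as a command-line argument, so it is not recorded in history or visible in the process list.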

#3. Can PostgreSQL and MySQL run on a single machine?

Yes, you can run PostgreSQL and MySQL on the same machine if different apps need different engines. They listen on different ports (5432 and 3306) and don't conflict. Just budget RAM accordingly; both have non-trivial memory footprints when active.

Step 5: Deploying the actual applications

#1. Laravel / PHP app

Ref: https://laravel.com/docs/master/deployment

SSH into the app user and pull the code:

In local terminal:

ssh chotkari_api@<server-ip>

After ssh (in remote shell):

git clone git@github.com:yourorg/chotkari-api.git app

cd app

composer install --no-dev --optimize-autoloader

cp .env.example .env

# fill in DB credentials, app key, etc.

php artisan key:generate

php artisan migrate --force

php artisan config:cache

php artisan route:cache

php artisan view:cache

exit

Permissions for Laravel's writable directories:

sudo chown -R chotkari_api:chotkari_api /home/chotkari_api/app

sudo chmod -R 775 /home/chotkari_api/app/storage

sudo chmod -R 775 /home/chotkari_api/app/bootstrap/cache

sudo chmod 600 /home/chotkari_api/app/.env

For Laravel's queue worker and scheduler, use systemd rather than nohup or screen:

/etc/systemd/system/chotkari_api-queue.service:

[Unit]

Description=Laravel queue worker for chotkari_api

After=network.target

[Service]

User=chotkari_api

Group=chotkari_api

Restart=always

RestartSec=3

WorkingDirectory=/home/chotkari_api/app

ExecStart=/usr/bin/php artisan queue:work --sleep=3 --tries=3 --max-time=3600

StandardOutput=append:/home/chotkari_api/app/storage/logs/queue.log

StandardError=append:/home/chotkari_api/app/storage/logs/queue.log

[Install]

WantedBy=multi-user.target

sudo systemctl daemon-reload

sudo systemctl enable --now chotkari_api-queue.service

For the scheduler, a single root-owned cron entry calling Laravel's scheduler as the app user is cleanest:

sudo crontab -e

* * * * * sudo -u chotkari_api /usr/bin/php /home/chotkari_api/app/artisan schedule:run >> /dev/null 2>&1

#2. Next.js (Node-rendered) app

Install Node.js via NodeSource:

curl -fsSL https://deb.nodesource.com/setup_20.x | sudo -E bash -

sudo apt install -y nodejs

Deploy as the app user:

ssh chotkari_dashboard@<server-ip-address>

git clone git@github.com:yourorg/dashboard.git app

cd app

npm ci

npm run build

exit

Run with systemd. /etc/systemd/system/chotkari_dashboard.service:

[Unit]

Description=Next.js dashboard

After=network.target

[Service]

User=chotkari_dashboard

Group=chotkari_dashboard

WorkingDirectory=/home/chotkari_dashboard/app

Environment=NODE_ENV=production

Environment=PORT=3000

ExecStart=/home/chotkari_dashboard/app/node_modules/.bin/next start -p 3000

# (or: ExecStart=/usr/bin/npm start)

Restart=always

RestartSec=3

StandardOutput=append:/home/chotkari_dashboard/app.log

StandardError=append:/home/chotkari_dashboard/app.log

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

sudo systemctl daemon-reload

sudo systemctl enable --now chotkari_dashboard.service

For purely static Next.js exports (output: 'export' in next.config.js on current versions, or the older next export command) or static React builds, skip the Node service entirely; just point nginx's root at the out/ or build/ directory and serve as static files.

#3. Pure Node.js API

Same pattern as Next.js. If you prefer PM2 over systemd because the dev team is more familiar with it, that's fine; but install PM2 globally as the app user, not as root, and use pm2 startup systemd so it survives reboots. Most teams I've worked with end up preferring systemd for production: it's already there, no extra dependency, and journalctl logs are unified with the rest of the system.

#4. Static React / Vite frontend

The simplest of all. Build, copy dist/ or build/ to the app user's home, point nginx at it:

server {

listen 80;

server_name app.chotkari.com;

root /home/chotkari_web/app/dist;

index index.html;

location / {

try_files $uri $uri/ /index.html; # SPA fallback

}

location ~* \.(js|css|woff2?|svg|png|jpg|webp)$ {

expires 1y;

add_header Cache-Control "public, immutable";

}

}

No PHP-FPM, no Node process, just static files.

Bonus: Cloudflare configuration

In the Cloudflare dashboard for each domain:

#1. DNS tab:

Create an A record pointing the app subdomain (e.g. api.chotkari.com) to your server's IPv4 address. Click the cloud icon so it's orange (proxied), not grey.

#2. SSL/TLS tab:

- Set encryption mode to Full (strict). "Flexible" is dangerous; it encrypts user→Cloudflare but leaves Cloudflare→origin in plaintext, and the app sees HTTPS=off, causing all sorts of broken redirects.

- Generate an Origin Certificate (Cloudflare → SSL/TLS → Origin Server → Create Certificate). Cloudflare gives you a cert + key valid for 15 years, which only Cloudflare's edge will trust. Install on the origin:

sudo mkdir -p /etc/ssl/cloudflare

sudo nano /etc/ssl/cloudflare/api.chotkari.com.pem # paste the cert

sudo nano /etc/ssl/cloudflare/api.chotkari.com.key # paste the key

sudo chmod 600 /etc/ssl/cloudflare/*.key

Then update each nginx server block to listen on 443 with that cert:

server {

listen 443 ssl http2;

listen [::]:443 ssl http2;

server_name api.chotkari.com;

ssl_certificate /etc/ssl/cloudflare/api.chotkari.com.pem;

ssl_certificate_key /etc/ssl/cloudflare/api.chotkari.com.key;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

# ... rest of config (root, locations, etc.)

}

# Redirect HTTP to HTTPS

server {

listen 80;

server_name api.chotkari.com;

return 301 https://$host$request_uri;

}

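- Enable Authenticated Origin Pulls so nginx will only accept requests carrying Cloudflare's client certificate (on the nginx side this means pointing ssl_client_certificate at Cloudflare's origin-pull CA and setting ssl_verify_client on). This is the strongest origin protection: even if your IP leaks, anyone hitting it directly is rejected before reaching your app.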

#3. Speed / Caching tab:

Enable Brotli, Auto Minify (HTML/CSS/JS), and set Caching → Browser Cache TTL to a sensible value (4 hours is a good default).

#4. Security tab:

Enable Bot Fight Mode (free tier) and set Security Level to Medium. Add WAF custom rules if you have public APIs that should be rate-limited.

Bonus: Pieces I haven't talked about yet (but you'll need)

#1. Log rotation

Your per-pool PHP logs and per-site nginx logs will grow. Add /etc/logrotate.d/php-fpm-apps:

/var/log/php-fpm/*.log {

daily

rotate 14

compress

delaycompress

missingok

notifempty

# no mode/owner given: each new log keeps the mode and owner of the file it replaces

create

postrotate

systemctl reload php8.4-fpm > /dev/null

endscript

}

#2. Backups

At minimum, nightly database dumps to a remote location (S3, Backblaze B2, another VPS):

#!/bin/bash

# /usr/local/bin/backup-databases.sh, run by cron at 03:00

# Abort on any error, including a failed dump on the left side of a pipe

set -euo pipefail

DATE=$(date +%Y-%m-%d)

DEST=/var/backups/db/$DATE

mkdir -p "$DEST"

# PostgreSQL: dump every database except templates

sudo -u postgres pg_dumpall --clean --if-exists | gzip > "$DEST/pg_all.sql.gz"

# MySQL: every database

mysqldump --defaults-file=/root/.my.cnf --all-databases --single-transaction --routines --triggers | gzip > "$DEST/mysql_all.sql.gz"

# Sync offsite

rclone sync /var/backups/db remote:my-backups/db/

# Keep only last 7 days locally (-mindepth 1 protects /var/backups/db itself)

find /var/backups/db -mindepth 1 -maxdepth 1 -type d -mtime +7 -exec rm -rf {} \;

#3. Test your restores

A backup that has never been restored is just hope.
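A full restore into a scratch database is the real test, but a cheap first check is to confirm that each dump is at least a valid, non-empty gzip file. The sketch below covers only that integrity check, not the restore itself; verify_dumps is a hypothetical helper name.

```shell
# Hypothetical helper: checks every *.sql.gz in a directory.
# A dump passes if gzip can read it to the end and it is not empty.
verify_dumps() {
  local dir=$1 f status=0
  for f in "$dir"/*.sql.gz; do
    # With no matches the glob stays literal, so this catches an empty dir.
    if [ ! -e "$f" ]; then
      echo "FAIL: no .sql.gz files in $dir"
      return 1
    fi
    if gzip -t "$f" 2>/dev/null &&
       [ "$(gzip -dc "$f" | head -c 1 | wc -c)" -gt 0 ]; then
      echo "ok:   $f"
    else
      echo "FAIL: $f"
      status=1
    fi
  done
  return "$status"
}

# Usage on the server, e.g. at the end of the nightly backup:
#   verify_dumps "/var/backups/db/$(date +%Y-%m-%d)"
```

A truncated dump from a full disk or an interrupted job fails this check, which is the most common silent backup failure; it still says nothing about whether the SQL restores cleanly.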

#4. Monitoring

Install netdata (free, real-time dashboards) or use Cloudflare's free analytics + UptimeRobot for external probing. At minimum, you want alerts for: disk > 80%, memory > 90% sustained, any service in a failed state, any 5xx rate spike.
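If you want the disk alert before setting up a full monitoring stack, a small cron-driven check is enough to start with. This is a minimal sketch: disk_usage_pct and check_disk are hypothetical helpers, and how you deliver the alert (mail, webhook) is left open.

```shell
# Hypothetical helper: prints the used percentage of the filesystem
# holding the given path, as a bare integer.
disk_usage_pct() {
  # df -P gives stable POSIX columns; field 5 on line 2 is the use percentage.
  df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Hypothetical helper: returns 1 and prints an alert when usage exceeds
# the limit, 2 if usage could not be determined, 0 otherwise.
check_disk() {
  local path=$1 limit=$2 used
  used=$(disk_usage_pct "$path") || return 2
  [ -n "$used" ] || return 2
  if [ "$used" -gt "$limit" ]; then
    echo "ALERT: $path is ${used}% full (limit ${limit}%)"
    return 1
  fi
  echo "ok: $path is ${used}% full"
}

# Usage from cron, matching the 80% threshold mentioned above:
#   check_disk / 80 || echo "disk alert on $(hostname)"
```

Cron mails any output to the job's owner by default if a mail transport is configured, so printing only on failure turns this into a basic alert.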

#5. Deployment automation

For each app, set up a deploy user (chotkari_api itself works) with a deploy SSH key authorized in your CI. A minimal GitHub Actions deploy step:

- uses: appleboy/ssh-action@v1

  with:

    host: ${{ secrets.SERVER_HOST }}

    username: chotkari_api

    key: ${{ secrets.DEPLOY_KEY }}

    script: |

      cd ~/app

      git pull

      composer install --no-dev --optimize-autoloader

      php artisan migrate --force

      php artisan config:cache

      sudo systemctl reload php8.4-fpm

sudo systemctl reload php8.4-fpm requires a narrow sudoers rule — see below — since the app user otherwise has no sudo:

chotkari_api ALL=(root) NOPASSWD: /bin/systemctl reload php8.4-fpm

This is the one sudoers exception, scoped to a single command. It does not violate the "no sudo for app users" rule in spirit because the user can do exactly one thing as root: reload its own runtime.

#6. Zero-downtime deployment

For Node/Next.js, use systemd's ExecReload with a SIGHUP-aware server, or run two instances on adjacent ports behind an nginx upstream and swap between them. For Laravel, php artisan down + deploy + php artisan up is fine for low-traffic apps; for higher traffic, use atomic symlink switching (the releases/ directory pattern, as in Laravel Forge / Deployer / Capistrano).
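The symlink-switching idea can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular deploy tool implements it: activate_release is a hypothetical helper, the layout (releases/<name> plus a current symlink) is assumed, and mv -T requires GNU coreutils.

```shell
# Hypothetical helper: point <base>/current at <base>/releases/<release>.
# The new symlink is built under a temporary name and then renamed over
# the old one, so there is no moment when "current" is missing.
activate_release() {
  local base=$1 release=$2
  if [ ! -d "$base/releases/$release" ]; then
    echo "no such release: $release" >&2
    return 1
  fi
  ln -sfn "releases/$release" "$base/current.tmp"
  # -T treats the destination as a plain file, so the existing symlink is
  # replaced by rename() instead of the new link being moved inside it.
  mv -T "$base/current.tmp" "$base/current"
}

# Usage as the app user after building a new release directory:
#   activate_release /home/chotkari_api/app 2026-05-04_1022
```

With this layout nginx's root would point at the current/public path rather than a fixed directory, and PHP-FPM typically still needs a reload afterwards so that cached file paths pick up the new release.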

#7. Resource isolation upgrade path

If one app starts hogging CPU and starving the others, the next step up from per-pool tuning is systemd cgroups slices. You can drop a Slice=chotkari_api.slice into the systemd unit, define limits in /etc/systemd/system/chotkari_api.slice, and cap CPU/memory hard. Same for PHP-FPM pools — modern systemd lets you put the FPM master under per-app slices too.

If you outgrow that, the next step is Docker / Podman per app, and beyond that, splitting onto multiple servers. But for the "many lightweight apps on one machine" use case this guide targets, you usually never need to go that far.

Quick mental checklist before you call it done

Every app has its own Linux user, no password, not in sudoers.

Every PHP app has its own FPM pool with its own socket and open_basedir.

Every nginx server block points to the right socket / upstream and has its own access/error log.

Every database has its own user with privileges scoped to that database only.

UFW is restricted to Cloudflare ranges + your SSH IP; root SSH and password SSH disabled.

Cloudflare is in Full (strict) mode with an origin certificate and Authenticated Origin Pulls.

nginx receives the real client IP via CF-Connecting-IP.

Backups run nightly, are sent offsite, and have been test-restored at least once.

Logs rotate. Disk usage is monitored.

Prepare a documented runbook for "how do I deploy app X" and "how do I restore database Y"; even if it's just a note in your password manager.

Get those ten right, and you have a server that's secure, debuggable, and capable of carrying a real production workload for years.

If you have any questions, confusions, suggestions, or corrections, please do comment. Thank you!